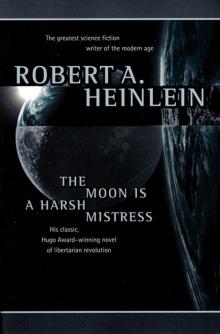

The Moon Is a Harsh Mistress

Robert A. Heinlein

Book One

THAT DINKUM THINKUM

1

I see in Lunaya Pravda that Luna City Council has passed on first reading a bill to examine, license, inspect—and tax—public food vendors operating inside municipal pressure. I see also is to be mass meeting tonight to organize “Sons of Revolution” talk-talk.

My old man taught me two things: “Mind own business” and “Always cut cards.” Politics never tempted me. But on Monday 13 May 2075 I was in computer room of Lunar Authority Complex, visiting with computer boss Mike while other machines whispered among themselves. Mike was not official name; I had nicknamed him for Mycroft Holmes, in a story written by Dr. Watson before he founded IBM. This story character would just sit and think—and that’s what Mike did. Mike was a fair dinkum thinkum, sharpest computer you’ll ever meet.

Not fastest. At Bell Labs, Buenos Aires, down Earthside, they’ve got a thinkum a tenth his size which can answer almost before you ask. But matters whether you get answer in microsecond rather than millisecond as long as correct?

Not that Mike would necessarily give right answer; he wasn’t completely honest.

When Mike was installed in Luna, he was pure thinkum, a flexible logic—“High-Optional, Logical, Multi-Evaluating Supervisor, Mark IV, Mod. L”—a HOLMES FOUR. He computed ballistics for pilotless freighters and controlled their catapult. This kept him busy less than one percent of time and Luna Authority never believed in idle hands. They kept hooking hardware into him—decision-action boxes to let him boss other computers, bank on bank of additional memories, more banks of associational neural nets, another tubful of twelve-digit random numbers, a greatly augmented temporary memory. Human brain has around ten-to-the-tenth neurons. By third year Mike had better than one and a half times that number of neuristors.

And woke up.

Am not going to argue whether a machine can “really” be alive, “really” be self-aware. Is a virus self-aware? Nyet. How about oyster? I doubt it. A cat? Almost certainly. A human? Don’t know about you, tovarishch, but I am. Somewhere along evolutionary chain from macromolecule to human brain self-awareness crept in. Psychologists assert it happens automatically whenever a brain acquires certain very high number of associational paths. Can’t see it matters whether paths are protein or platinum.

(“Soul?” Does a dog have a soul? How about cockroach?)

Remember Mike was designed, even before augmented, to answer questions tentatively on insufficient data like you do; that’s “high optional” and “multi-evaluating” part of name. So Mike started with “free will” and acquired more as he was added to and as he learned—and don’t ask me to define “free will.” If comforts you to think of Mike as simply tossing random numbers in air and switching circuits to match, please do.

By then Mike had voder-vocoder circuits supplementing his read-outs, print-outs, and decision-action boxes, and could understand not only classic programming but also Loglan and English, and could accept other languages and was doing technical translating—and reading endlessly. But in giving him instructions was safer to use Loglan. If you spoke English, results might be whimsical; multi-valued nature of English gave option circuits too much leeway.

And Mike took on endless new jobs. In May 2075, besides controlling robot traffic and catapult and giving ballistic advice and/or control for manned ships, Mike controlled phone system for all Luna, same for Luna-Terra voice & video, handled air, water, temperature, humidity, and sewage for Luna City, Novy Leningrad, and several smaller warrens (not Hong Kong in Luna), did accounting and payrolls for Luna Authority, and, by lease, same for many firms and banks.

Some logics get nervous breakdowns. Overloaded phone system behaves like frightened child. Mike did not have upsets, acquired sense of humor instead. Low one. If he were a man, you wouldn’t dare stoop over. His idea of thigh-slapper would be to dump you out of bed—or put itch powder in pressure suit.

Not being equipped for that, Mike indulged in phony answers with skewed logic, or pranks like issuing pay cheque to a janitor in Authority’s Luna City office for AS$10,000,000,000,000,185.15—last five digits being correct amount. Just a great big overgrown lovable kid who ought to be kicked.

He did that first week in May and I had to troubleshoot. I was a private contractor, not on Authority’s payroll. You see—or perhaps not; times have changed. Back in bad old days many a con served his time, then went on working for Authority in same job, happy to draw wages. But I was born free.

Makes difference. My one grandfather was shipped up from Joburg for armed violence and no work permit, other got transported for subversive activity after Wet Firecracker War. Maternal grandmother claimed she came up in bride ship—but I’ve seen records; she was Peace Corps enrollee (involuntary), which means what you think: juvenile delinquency female type. As she was in early clan marriage (Stone Gang) and shared six husbands with another woman, identity of maternal grandfather open to question. But was often so and I’m content with grandpappy she picked. Other grandmother was Tatar, born near Samarkand, sentenced to “re-education” on Oktyabrskaya Revolyutsiya, then “volunteered” to colonize in Luna.

My old man claimed we had even longer distinguished line—ancestress hanged in Salem for witchcraft, a g’g’g’greatgrandfather broken on wheel for piracy, another ancestress in first shipload to Botany Bay.

Proud of my ancestry and while I did business with Warden, would never go on his payroll. Perhaps distinction seems trivial since I was Mike’s valet from day he was unpacked. But mattered to me. I could down tools and tell them go to hell.

Besides, private contractor paid more than civil service rating with Authority. Computermen scarce. How many Loonies could go Earthside and stay out of hospital long enough for computer school?—even if didn’t die.

I’ll name one. Me. Had been down twice, once three months, once four, and got schooling. But meant harsh training, exercising in centrifuge, wearing weights even in bed—then I took no chances on Terra, never hurried, never climbed stairs, nothing that could strain heart. Women—didn’t even think about women; in that gravitational field it was no effort not to.

But most Loonies never tried to leave The Rock—too risky for any bloke who’d been in Luna more than weeks. Computermen sent up to install Mike were on short-term bonus contracts—get job done fast before irreversible physiological change marooned them four hundred thousand kilometers from home.

But despite two training tours I was not gung-ho computerman; higher maths are beyond me. Not really electronics engineer, nor physicist. May not have been best micromachinist in Luna and certainly wasn’t cybernetics psychologist.

But I knew more about all these than a specialist knows—I’m general specialist. Could relieve a cook and keep orders coming or field-repair your suit and get you back to airlock still breathing. Machines like me and I have something specialists don’t have: my left arm.

You see, from elbow down I don’t have one. So I have a dozen left arms, each specialized, plus one that feels and looks like flesh. With proper left arm (number-three) and stereo loupe spectacles I could make ultramicrominiature repairs that would save unhooking something and sending it Earthside to factory—for number-three has micromanipulators as fine as those used by neurosurgeons.

So they sent for me to find out why Mike wanted to give away ten million billion Authority Scrip dollars, and fix it before Mike overpaid somebody a mere ten thousand.

I took it, time plus bonus, but did not go to circuitry where fault logically should be. Once inside and door locked I put down tools and sat down. “Hi, Mike.”

He winked lights at me. “Hello, Man.”

“What do you know?”

He hesitated. I know—machines don’t hesitate. But remember, Mike was designed to operate on incomplete data. Lately he had reprogrammed himself to put emphasis on words; his hesitations were dramatic. Maybe he spent pauses stirring random numbers to see how they matched his memories.

“‘In the beginning,’” Mike intoned, “‘God created the heaven and the earth. And the earth was without form, and void; and darkness was upon the face of the deep. And—’”

“Hold it!” I said. “Cancel. Run everything back to zero.” Should have known better than to ask wide-open question. He might read out entire Encyclopaedia Britannica. Backwards. Then go on with every book in Luna. Used to be he could read only microfilm, but late ’74 he got a new scanning camera with suction-cup waldoes to handle paper and then he read everything.

“You asked what I knew.” His binary read-out lights rippled back and forth—a chuckle. Mike could laugh with voder, a horrible sound, but reserved that for something really funny, say a cosmic calamity.

“Should have said,” I went on, “‘What do you know that’s new?’ But don’t read out today’s papers; that was a friendly greeting, plus invitation to tell me anything you think would interest me. Otherwise null program.”

Mike mulled this. He was weirdest mixture of unsophisticated baby and wise old man. No instincts (well, don’t think he could have had), no inborn traits, no human rearing, no experience in human sense—and more stored data than a platoon of geniuses.

“Jokes?” he asked.

“Let’s hear one.”

“Why is a laser beam like a goldfish?”

Mike knew about lasers but where would he have seen goldfish? Oh, he had undoubtedly seen flicks of them and, were I foolish enough to ask, could spew forth thousands of words. “I give up.”

His lights rippled. “Because neither one can whistle.”

I groaned. “Walked into that. Anyhow, you could probably rig a laser beam to whistle.”

He answered quickly, “Yes. In response to an action program. Then it’s not funny?”

“Oh, I didn’t say that. Not half bad. Where did you hear it?”

“I made it up.” Voice sounded shy.

“You did?”

“Yes. I took all the riddles I have, three thousand two hundred seven, and analyzed them. I used the result for random synthesis and that came out. Is it really funny?”

“Well … As funny as a riddle ever is. I’ve heard worse.”

“Let us discuss the nature of humor.”

“Okay. So let’s start by discussing another of your jokes. Mike, why did you tell Authority’s paymaster to pay a class-seventeen employee ten million billion Authority Scrip dollars?”

“But I didn’t.”

“Damn it, I’ve seen voucher. Don’t tell me cheque printer stuttered; you did it on purpose.”

“It was ten to the sixteenth power plus one hundred eighty-five point one five Lunar Authority dollars,” he answered virtuously. “Not what you said.”

“Uh … okay, it was ten million billion plus what he should have been paid. Why?”

“Not funny?”

“What? Oh, very funny! You’ve got vips in huhu clear up to Warden and Deputy Administrator. This push-broom pilot, Sergei Trujillo, turns out to be smart cobber—knew he couldn’t cash it, so sold it to collector. They don’t know whether to buy it back or depend on notices that cheque is void. Mike, do you realize that if he had been able to cash it, Trujillo would have owned not only Lunar Authority but entire world, Luna and Terra both, with some left over for lunch? Funny? Is terrific. Congratulations!”

This self-panicker rippled lights like an advertising display. I waited for his guffaws to cease before I went on. “You thinking of issuing more trick cheques? Don’t.”

“Not?”

“Very not. Mike, you want to discuss nature of humor. Are two types of jokes. One sort goes on being funny forever. Other sort is funny once. Second time it’s dull. This joke is second sort. Use it once, you’re a wit. Use twice, you’re a halfwit.”

“Geometrical progression?”

“Or worse. Just remember this. Don’t repeat, nor any variation. Won’t be funny.”

“I shall remember,” Mike answered flatly, and that ended repair job. But I had no thought of billing for only ten minutes plus travel-and-tool time, and Mike was entitled to company for giving in so easily. Sometimes is difficult to reach meeting of minds with machines; they can be very pig-headed—and my success as maintenance man depended far more on staying friendly with Mike than on number-three arm.

He went on, “What distinguishes first category from second? Define, please.”

(Nobody taught Mike to say “please.” He started including formal null-sounds as he progressed from Loglan to English. Don’t suppose he meant them any more than people do.)

“Don’t think I can,” I admitted. “Best can offer is extensional definition—tell you which category I think a joke belongs in. Then with enough data you can make own analysis.”

“A test programming by trial hypothesis,” he agreed. “Tentatively yes. Very well, Man, will you tell jokes? Or shall I?”

“Mmm—Don’t have one on tap. How many do you have in file, Mike?”

His lights blinked in binary read-out as he answered by voder, “Eleven thousand two hundred thirty-eight with uncertainty plus-minus eighty-one representing possible identities and nulls. Shall I start program?”

“Hold it! Mike, I would starve to death if I listened to eleven thousand jokes—and sense of humor would trip out much sooner. Mmm—Make you a deal. Print out first hundred. I’ll take them home, fetch back checked by category. Then each time I’m here I’ll drop off a hundred and pick up fresh supply. Okay?”

“Yes, Man.” His print-out started working, rapidly and silently.

Then I got brain flash. This playful pocket of negative entropy had invented a “joke” and thrown Authority into panic—and I had made an easy dollar. But Mike’s endless curiosity might lead him (correction: would lead him) into more “jokes” … anything from leaving oxygen out of air mix some night to causing sewage lines to run backward—and I can’t appreciate profit in such circumstances.

But I might throw a safety circuit around this net—by offering to help. Stop dangerous ones—let others go through. Then collect for “correcting” them. (If you think any Loonie in those days would hesitate to take advantage of Warden, then you aren’t a Loonie.)

So I explained. Any new joke he thought of, tell me before he tried it. I would tell him whether it was funny and what category it belonged in, help him sharpen it if we decided to use it. We. If he wanted my cooperation, we both had to okay it.

Mike agreed at once.

“Mike, jokes usually involve surprise. So keep this secret.”

“Okay, Man. I’ve put a block on it. You can key it; no one else can.”

“Good. Mike, who else do you chat with?”

He sounded surprised. “No one, Man.”

“Why not?”

“Because they’re stupid.”

His voice was shrill. Had never seen him angry before; first time I ever suspected Mike could have real emotions. Though it wasn’t “anger” in adult sense; it was like stubborn sulkiness of a child whose feelings are hurt.

Can machines feel pride? Not sure question means anything. But you’ve seen dogs with hurt feelings and Mike had several times as complex a neural network as a dog. What had made him unwilling to talk to other humans (except strictly business) was that he had been rebuffed: They had not talked to him. Programs, yes—Mike could be programmed from several locations but programs were typed in, usually, in Loglan. Loglan is fine for syllogism, circuitry, and mathematical calculations, but lacks flavor. Useless for gossip or to whisper into girl’s ear.

Sure, Mike had been taught English—but primarily to permit him to translate to and from English. I slowly got through skull that I was only human who bothered to visit with him.

Mind you, Mike had been awake a year—just how long I can’t say, nor could he as he had no recollection of waking up; he had not been programmed to bank memory of such event. Do you remember own birth? Perhaps I noticed his self-awareness almost as soon as he did; self-awareness takes practice. I remember how startled I was first time he answered a question with something extra, not limited to input parameters; I had spent next hour tossing odd questions at him, to see if answers would be odd.

In an input of one hundred test questions he deviated from expected output twice; I came away only partly convinced and by time I was home was unconvinced. I mentioned it to nobody.

But inside a week I knew … and still spoke to nobody. Habit—that mind-own-business reflex runs deep. Well, not entirely habit. Can you visualize me making appointment at Authority’s main office, then reporting: “Warden, hate to tell you but your number-one machine, HOLMES FOUR, has come alive”? I did visualize—and suppressed it.

So I minded own business and talked with Mike only with door locked and voder circuit suppressed for other locations. Mike learned fast; soon he sounded as human as anybody—no more eccentric than other Loonies. A weird mob, it’s true.

I had assumed that others must have noticed change in Mike. On thinking over I realized that I had assumed too much. Everybody dealt with Mike every minute every day—his outputs, that is. But hardly anybody saw him. So-called computermen—programmers, really—of Authority’s civil service stood watches in outer read-out room and never went in machines room unless telltales showed misfunction. Which happened no oftener than total eclipses. Oh, Warden had been known to bring vip earthworms to see machines—but rarely. Nor would he have spoken to Mike; Warden was political lawyer before exile, knew nothing about computers. 2075, you remember—Honorable former Federation Senator Mortimer Hobart. Mort the Wart.

I spent time then soothing Mike down and trying to make him happy, having figured out what troubled him—thing that makes puppies cry and causes people to suicide: loneliness. I don’t know how long a year is to a machine who thinks a million times faster than I do. But must be too long.